‘Dull Store Stuff’: Small Language Models for Retail Sensing are Better for Brands Too

RETAIL TYPICALLY BREAKS in the boring middle. A late delivery fails to trigger a reorder. A promotion scans wrong for half a day. Search results pull up the wrong variant because the attribute data is a mess.

None of this is glamorous, but it matters greatly to the successful operation of stores and the success of the brands whose products they sell.

That’s why the most useful, and most overlooked, shift in AI for retail right now isn’t the race toward bigger, smarter language models. It’s the expansion of small language models, or more accurately “small defined database LLMs”, to deal with the unexciting, repeatable problems that drive performance.

Language models have been around for about 20 years, but in their early form they were small, tightly controlled, database-driven systems. What has changed for small models isn’t the size of the data (it’s still limited and well defined), but the kinds of outcomes these models can generate.

By contrast, today’s huge generative AI models stem from the idea of giving them all the data in the world. That breadth creates a large, messy cloud of probabilities, which often makes their answers feel less consistent and statistically less reliable than the 1:1 precision of smaller, purpose-built models.

Shop floor and backroom headaches

Most store and ecommerce problems are specific, operational and deeply contextual. Staff want to know why something didn’t arrive, what they’re allowed to substitute, or whether a product is meant to be ranged locally or centrally. Ecommerce teams spend time cleaning catalogues, fixing search logic, and mapping supplier data that arrives in every format except the one requested. These are not questions that need a model trained on the entire internet. They need something trained on your rules, your data, your constraints.

Historically, this has been managed through machine learning – AI models designed to detect deviations from expected performance. When an operational process produces an anomaly, it is flagged, analyzed for root cause, and routed into systems designed to correct it.
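To make the “deviation from expected performance” idea concrete, here is a minimal sketch in Python. It is an illustration, not a description of any retailer’s system: the function name `find_anomalies`, the store IDs, the sales figures and the z-score threshold of 3 are all assumptions chosen for the example. It compares today’s unit sales of a promoted line in each store with that store’s own recent history and flags the stores that fall far outside it.

```python
from statistics import mean, stdev


def find_anomalies(history_by_store, todays_values, z_threshold=3.0):
    """Flag stores whose value today deviates sharply from their own history.

    Returns a list of (store_id, z_score) pairs for flagged stores.
    Names and threshold are hypothetical, chosen for illustration only.
    """
    flagged = []
    for store_id, value in todays_values.items():
        history = history_by_store[store_id]
        mu = mean(history)
        sigma = stdev(history)  # sample standard deviation; needs 2+ points
        if sigma == 0:
            # No variation in the history, so a z-score is undefined.
            # This sketch simply skips the store.
            continue
        z = (value - mu) / sigma
        if abs(z) >= z_threshold:
            flagged.append((store_id, round(z, 1)))
    return flagged


# Made-up daily unit sales for one promoted line in two stores.
history = {
    "store_001": [100, 104, 98, 101, 97],
    "store_002": [80, 82, 79, 81, 78],
}
today = {"store_001": 99, "store_002": 40}

flagged_stores = find_anomalies(history, today)
print(flagged_stores)  # store_002 is flagged; store_001 is within its normal range
```

A flag like this only says that something is off in store_002. Working out whether the cause is a promotion that scanned wrong, a missed delivery or a ranging error is the root-cause step that follows.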

What is changing now is the integration of broader AI capabilities. New solutions combine anomaly detection with tools such as computer vision and language models. Instead of simply identifying that something is wrong, these systems can interpret what the issue looks like, describe it in clear terms, and recommend what a corrected state should be. In other words, AI is evolving from flagging problems to helping define and guide the fix.

One example is product images whose copy and specifications fall short of the defined criteria, or lack the additional attributes needed for use online or on in-store promotional signage. A small language model combined with visual tools can identify the issue and propose a fix in real time.

The business case for task-specific AI

Walmart has spoken openly about this reality. Rather than relying on massive general models, it uses task-specific systems to support store associates with operational questions because speed, accuracy and reliability matter more than breadth when you’re standing on a shop floor. When a checkout queue is forming, nobody cares how eloquent the answer is. They care that it’s right.

Online, the same pattern shows up at scale. Search and discovery in ecommerce are full of small, uncelebrated jobs: fixing broken queries, interpreting vague intent, matching “a red t-shirt” to the right shade, size and brand.

When these processes fail the shopper, brands lose selling opportunities for their products.

Shopify’s engineering teams have written about using smaller, fine-tuned models to improve product understanding and merchant workflows without blowing up response times or costs. Instacart has made similar points in grocery, where slow or irrelevant search results show up immediately in basket abandonment.

There’s also a hard-nosed economic reason why this matters. McKinsey estimates that most AI value in retail will come from operational productivity, not creative use cases (The Economic Potential of Generative AI). The money comes from doing the dull stuff better, not from flashy demos.

Smaller models are cheaper to run, easier to control, and far less likely to introduce chaos into already fragile margins. That also helps explain why retail has sat in the bottom tier of industries adopting LLM-based AI tools: those tools fail to address daily operational concerns.

Remember edge computing?

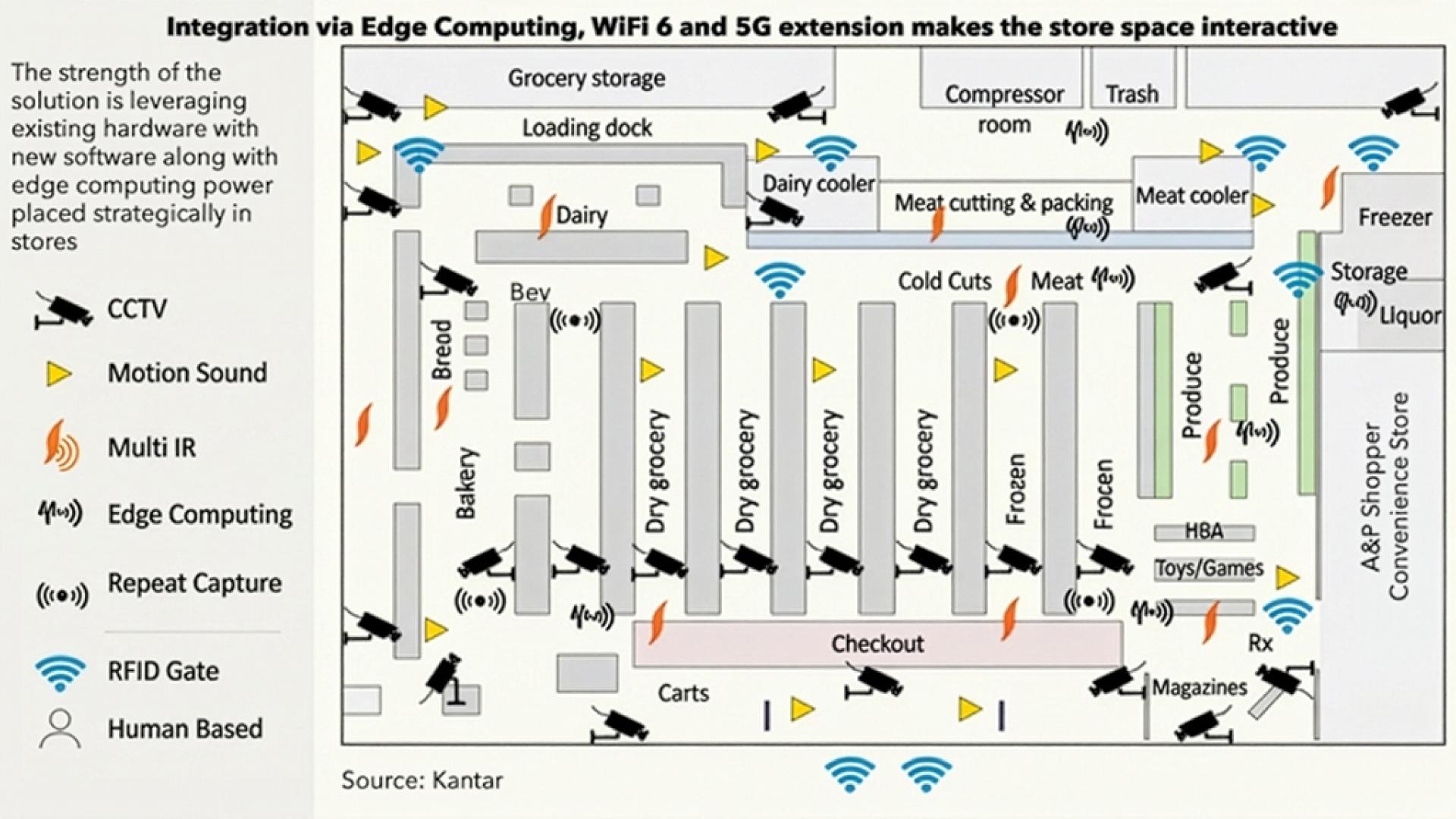

Stores are full of legacy systems, patchy connectivity and devices that were never designed for AI. The usual workaround has been to run different AI tools, such as machine learning, deep learning and even fuzzy logic, in parallel, without integrating them into the complex and unique IT processes around them.

Expecting them to depend on constant calls to giant cloud models is optimistic at best. NVIDIA has highlighted how smaller, optimized models can run closer to the action, on local servers or edge devices, supporting decisions without relying on perfect connectivity (NVIDIA Retail AI briefings). Anyone who has watched systems crawl during peak trading understands why this matters.

Edge computing is ideal for retail because it brings processing power into the store, closer to where data is generated and decisions need to happen: on the shop floor, at the shelf, and at checkout. In retail, things change fast. Prices, stock levels, fraud checks, queues and losses all move in real time, within seconds, not at the slower pace of sending data back and forth to the cloud.
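As a rough illustration of deciding locally and syncing later, the Python sketch below checks a scanned price against a copy of the price file held in the store and queues any mismatch until a connection is available. Everything here is hypothetical: the class name `EdgeNode`, the SKU, the prices (held in pence to avoid rounding issues) and the `send` callback that stands in for whatever head-office system would receive the events.

```python
from collections import deque


class EdgeNode:
    """A store-level node that decides locally and syncs when it can.

    Hypothetical sketch: prices are integers in minor units (pence).
    """

    def __init__(self, local_prices):
        self.local_prices = dict(local_prices)  # in-store copy of the price file
        self.outbox = deque()                   # events waiting for a connection

    def price_check(self, sku, scanned_price):
        """Return True if the scanned price matches the local price file."""
        expected = self.local_prices.get(sku)
        ok = expected is not None and expected == scanned_price
        if not ok:
            # The decision is made immediately; reporting can wait.
            self.outbox.append(
                {"sku": sku, "scanned": scanned_price, "expected": expected}
            )
        return ok

    def sync(self, send, online):
        """Forward queued events through `send` while a connection is available."""
        sent = 0
        while online and self.outbox:
            send(self.outbox.popleft())
            sent += 1
        return sent


node = EdgeNode({"SKU-123": 250})
node.price_check("SKU-123", 250)   # matches: nothing queued
node.price_check("SKU-123", 300)   # mismatch: queued for head office

received = []
node.sync(received.append, online=False)  # offline: event stays in the outbox
node.sync(received.append, online=True)   # back online: event is forwarded
```

The point of the design is that the till gets its answer from data already in the store, so a dropped connection delays the report to head office rather than the decision at the checkout.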

Zero latency is the new goal to make the smaller LM effective and profitable

Speed and rising data volumes are opening entirely new opportunities. It’s not just about capturing and using more data, but about integrating it seamlessly across the Internet of Things, which forms the backbone of many AI-driven solutions. When signals and responses flow quickly and consistently across systems, processes become far more connected and efficient.

On the ecommerce side of omnichannel, this speed means new product information, pricing changes and merchandising options can be updated and surfaced to shoppers almost instantly, helping guide them toward faster, more confident buying decisions.

Zero latency means that all the back-office and in-channel integration required to create that critical omnichannel experience is functional and in place. Edge computing puts that capability far closer to store staff and shoppers.

There’s a trust angle too. Smaller models trained on tightly defined data sets are easier to understand and govern. They tend to behave more predictably. In retail, predictability beats cleverness every time. A pricing or promo mistake doesn’t stay internal for long; it’s on social media before the shift ends. And front-line employees who see random errors from an AI solution will deliberately avoid using it.

None of this is anti-AI. It’s anti-fantasy

Dave and I have scar tissue from sitting in Monday trade meetings, walking stores after a messy promotion changeover, and dealing with the fallout of one corrupted pricing file, or of shelf wobblers meant to communicate multi-buys that were not put up during the busiest trading weekend. As we were sketching out this article, we agreed that retail performance rarely hinges on a single breakthrough idea but more on whether the basics held together that week. Brand success depends upon trading partner success.

Effective merchandising at retail has always come down to doing the basics properly: accurate prices, stock where it should be, promotions set up right, and stores that open and close without drama. Small language models don’t change the game; they just help teams stay on top of the details that usually slip when things get busy.

It’s the steady handling of those everyday pressures in retail that separates the good operators from the rest.

About the authors:

Paida Mugudubi is Head of Retail Insights, EMEA and APAC for Kantar Retail Practice (paida.mugudubi@kantar.com)

David Marcotte is Senior Vice President, Global Retail for Kantar Retail Practice (David.Marcotte@kantar.com)